AI Mentor

Check Your IQ

Free Expert Demo

Try Test

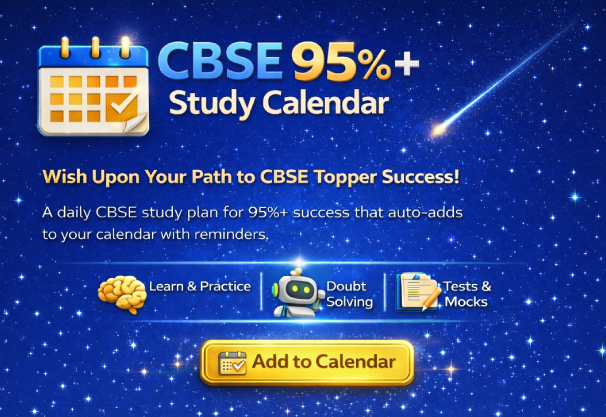

Courses

- Dropper NEET Course
- Dropper JEE Course
- Class - 12 NEET Course
- Class - 12 JEE Course
- Class - 11 NEET Course
- Class - 11 JEE Course
- Class - 10 Foundation NEET Course
- Class - 10 Foundation JEE Course
- Class - 10 CBSE Course
- Class - 9 Foundation NEET Course
- Class - 9 Foundation JEE Course
- Class - 9 CBSE Course
- Class - 8 CBSE Course
- Class - 7 CBSE Course
- Class - 6 CBSE Course

Offline Centres